Moral Machines: Teaching Robots Right From Wrong

Wendell Wallach

This article was adapted from a review presented by the author, Wendell Wallach, of his book, “Moral Machines: Teaching Robots Right from Wrong”, during his attendance at the 4th Annual Colloquium on the Law of Futuristic Persons, December 10, 2008, at the Florida Space Coast Office of Terasem Movement, Inc.

I was specifically asked to read from the book I co-authored with Colin Allen [1], “Moral Machines” [2], and we’ll just cover a couple of pages today, but first I’m going to start with the beginning of the section called “Tomorrow’s Headlines.”

“Robots March on Washington Demanding Their Civil Rights”

“Terrorist Avatar [3] Bombs Virtual Holiday Destination”

“Nobel Prize in Literature Awarded to IBM’s Deep-Bluedora”[4]

“Genocide Charges Leveled at FARL (Fuerzas Armadas Roboticas de Liberacion)”

“Nanobots [5] Repair Perforated Heart”

“VTB (Virtual Transaction Bot) Amasses Personal Fortune in Currency Market”

“UN Debates Prohibition of Self-Replicating AI”[6]

“Serial Stalkers Target Robotic Sex Workers”

“Are these headlines that will appear in this century or merely fodder for science fiction writers? In recent years, an array of serious computer scientists, legal theorists, and policy experts have begun addressing the challenges posed by highly intelligent (ro)bots [7] participating with humans in the commerce of daily life. Noted scientists like Ray Kurzweil [8] and Hans Moravec [9] talk enthusiastically about (ro)bots whose intelligence will be superior to that of humans, and how humans will achieve a form of eternal life by uploading their minds into computer systems. Their predictions of the advent of computer systems with intelligence comparable to humans around 2020-50 are based on a computational theory of mind and the projection of Moore’s law [10] over the next few decades. Legal scholars debate whether a conscious AI may be designated a "person" for legal purposes, or eventually have rights equal to those of humans.

Policy planners reflect on the need to regulate the development of technologies that could potentially threaten human existence as humans have known it. The number of articles on building moral decision-making faculties into (ro)bots is a drop in the proverbial bucket in comparison to the flood of writing addressing speculative future scenarios."

That section does not introduce one of the earlier chapters in the book. In fact, it’s the introduction to the very last chapter. It is this last chapter, "Dangers, Rights, and Responsibilities," which touches upon some of the issues that I think are going to be of particular concern for this audience. I’ll read a little bit more from that chapter, but first let me give you a sense of the context of the book in which these topics appear.

Moral Machines is the first book to map a new field of inquiry which is variously known as machine ethics, machine morality, artificial ethics, computational ethics, friendly AI, and robo-ethics. The focus of that field is the prospect for implementing moral decision-making faculties within artificially intelligent systems.

The field poses a series of questions. The first one is: Do we need robots and computers making moral decisions? When and where? For the rest of this talk I’m going to collectively refer to bots moving within computer systems as well as physically embedded robots as robots.

In Moral Machines we argue that there is a need to begin thinking right now about robots making moral decisions, because we are already moving into realms where the complexity of artificial entities is such that the designers and programmers can no longer predict how the systems will function with new inputs. Artificial entities are already making decisions which affect humans for good or bad.

So we need to begin extending the engineers’ concern with making the systems they build safe into having those systems make explicit ethical decisions, initially in very limited areas, but one question is how far that could expand as systems come to have higher-order decision-making faculties.

A third question addressed is: Are robots the kinds of entities capable of making moral decisions? There has been a lot of discussion throughout history about whether consciousness, free will, emotions, or other higher order mental and cognitive abilities are necessary to be able to make moral decisions. How will these be instantiated in robotics? Can they be built into robots? Yes, some people are predicting a technological singularity, but how do we get from here to there?

The first third of Moral Machines addresses those questions, but the bulk of the book looks at the problem of how to make ethics computationally tractable. How can ethics be turned into something that could actually be implemented within the artificial technologies that we have today or that we can anticipate within the very near future? It isn’t enough to project that we will eventually have human-level AI. How do we get from here to there?

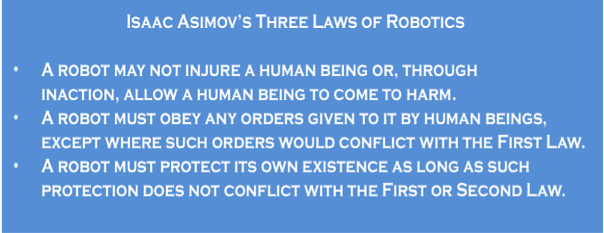

In what ways is ethics computationally tractable? We break that question down into two approaches. One we call top-down approaches, which is the attempt to implement an ethical theory, such as the Ten Commandments, Utilitarianism or even Asimov’s Three Laws [11]. How could you represent one of these theories in an artificially intelligent system so that it could actually make the kind of decisions that humans could feel comfortable with?

Then there are bottom-up approaches, which are inspired by evolution and developmental psychology. These are not so much about having some explicit notion of what is right, good, or just. They are more like the developmental process a child goes through as it learns about the forms of behavior that are appropriate. The more evolutionarily inspired bottom-up approaches come from the notion that a moral grammar may be the legacy of the evolutionary process. Could we reproduce the evolution of a moral grammar in artificial entities?

Will entities that can reason be able to adequately make moral decisions? This question gives rise to the recognition that additional abilities, which we call super-rational (beyond reason) faculties, will be required for artificial agents to be satisfactory moral agents. What about emotions? Are they good or bad? Will robots need some kind of emotional intelligence?

How about consciousness? How about sociability?

How about being embodied in the world of objects and things? How about a theory of mind, which is the capacity to recognize that what's in other people's minds is not the same as what's in yours, and to be able to intuit what their intentions are, what their beliefs are, what their goals are?

I believe what will be fascinating for some of you is that this subject provides a window on research trajectories in affective computing, machine consciousness, and other fascinating areas of research that you might not be aware are going on.

In the last third of Moral Machines we talk about hybrid approaches and about what might be a framework for having human-level moral decision-making faculties within robotics.

As I mentioned, the last chapter is about the “Dangers, Rights, and Responsibilities”, and that's divided into three sections.

The first is about the Singularity [12] and the challenges of ensuring that forms of AI whose intelligence is equal or superior to that of humans will be friendly to human considerations.

The middle section is about liability, moral agency, rights and duties, which I think will be of particular interest to people here today. Can robots be designated moral agents? Most theorists agree that once we've granted personhood to corporations it's not a great leap to grant personhood to machines, at least for legal purposes. Nevertheless, there will be debates about when robots have crossed thresholds where they will be granted the status of moral agents, or whether we might slip into such a state through no-fault insurance and other mechanisms that pass on de facto [13] responsibility to the machines.

What's been particularly interesting to me lately is the public policy context in which not only this discussion but the whole broader array of issues surrounding enhancement technologies appears. I'm just going to read you a little bit from the introduction to our discussion about the public policy ramifications of AI.

That section is called “Embrace, Reject, or Regulate”, which is a phrase I often use in relationship to all of technology and ethics, and to enhancement technologies specifically. Here it will be discussed in relationship to robotics.

"Public policy toward (ro)bots will undoubtedly be influenced by ideas about how to evaluate their intelligence and moral capacities. But political factors will play the larger role in determining the issues of accountability and rights for (ro)bots, and whether some forms of (ro)bot research will be regulated or outlawed.

(Ro)bot accountability is a tricky but manageable issue. For example, companies developing AI are concerned that they may be open to lawsuits even when their systems enhance human safety. Peter Norvig [14] of Google offers the example of cars driven by advanced technology rather than humans. Imagine that half the cars on U.S. highways are driven by (ro)bots, and the death toll decreases from roughly forty-two thousand a year to thirty-one thousand a year. Will the companies selling those cars be rewarded? Or will they be confronted with ten thousand lawsuits for deaths blamed on the (ro)bot drivers?

Norvig's question faces any new technology. It could just as easily be asked about a new drug that reduces the overall death rate from heart disease while directly causing some patients to die from side effects. Just as drug companies face lawsuits, so, too, will (ro)bot manufacturers be sued by (ro)bot-chasing lawyers. Some cases will have merit, and some won't. However, free societies have an array of laws, regulations, insurance policies, and juridical precedents that help protect industries from frivolous lawsuits. Companies pursuing the huge commercial market in (ro)botics will protect their commercial interests by relying on the existing frameworks and by petitioning legislatures for additional laws that help manage their liability.

However, as (ro)bots become more sophisticated, two questions may arise in the political arena. Can the (ro)bots themselves, rather than their manufacturers or users, be held directly liable or responsible for damages? Do sophisticated (ro)bots deserve any recognition of their own rights?

We think that both of these questions are futuristic compared to the aims of this book. Nevertheless, others have already started to discuss them, and we don't want to ignore that discussion entirely.

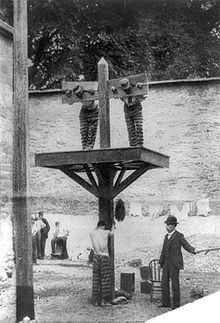

Whipping post, New Castle County Jail, Delaware, 1897, at: http://en.wikipedia.org/wiki/Pillory

There are many ways agents are held responsible for their actions within legal systems, corresponding to different available means of punishment. Human agents have historically been punished in a variety of ways: through infliction of pain, social ostracism or banishment, fines or other confiscation of property, or deprivation of liberty or life itself. Debates over whether it makes sense to hold (ro)bots accountable for their actions often center on whether any of these traditionally applied punishments make sense for artificial agents. For instance, the infliction of pain only counts as a real punishment for agents that are capable of feeling it. Depriving an agent of freedom only really punishes an agent who values freedom. And confiscation of property is only possible for agents who have property rights to begin with.

If you are convinced that artificial agents will never satisfy the conditions for real punishment, the idea of holding them directly accountable for their actions is a nonstarter. One can believe this and still agree with the main thrust of this book, which is that some facsimile of moral decision making is needed for autonomous systems. Successful AMAs [artificial moral agents] might be constructed even if they could never be held directly responsible for anything, just as artificial chess players can win tournaments even though they never get credit for doing so. [added]

However, you may be among the readers who believe that (ro)bots will eventually reach the point where it would be nothing but human prejudice to deny that they deserve equal treatment under the law. Such a movement is likely to come gradually, and with much disagreement about when the threshold has been crossed. In this respect, calls for (ro)bot rights are likely to mimic the politically significant movement to increase rights for animals. Much of the animal rights movement has focused on protecting the more intelligent species from pain and distress."

Logo of the Great Ape Project at: http://en.wikipedia.org/wiki/Great_ape_project

The next part of the book goes on and talks a little bit more about the whole development of animal rights and the question of whether robots will or will not ever have pain and distress. We do talk much earlier in the book about the development of affective architecture for robotic systems and whether they will ever have a kind of somatic architecture that allows them to feel pleasure and pain. That is still a big mystery, I would say, within the field of robotics.

As many of you are well aware, we have various protocols within research ethics, legal requirements that have to be fulfilled both in using humans in research and in using animals in research. Much of that has to do, particularly with animals, with minimizing the pain, or the kind of pain, that they will experience without real justification. The question is really what is and what isn't justified?

We are a long way from review boards for research on robotics, but certainly if we move in the direction of somatic architecture for robots, we are going to get calls for institutional review boards for robotics. Some people, such as Thomas Metzinger [15], a famous German philosopher, argue against there ever being experimentation that would introduce that kind of architecture into robotics. So that will be, again, not just a legal question, but a public policy question.

Let me just finish up with this little section.

"Regulating the treatment of (ro)bots in research is not the same as granting legal rights to them, but the establishment of protections provides a toehold for the assignment of rights. The progression toward rights for (ro)bots is likely to be slow. (Ro)bots may be programmed to demand energy, information, and eventually education, protection, and property rights, but how is one to evaluate whether they truly desire social goods and services? If a (ro)bot in a plaintive voice begs that you not turn it off, what criterion will you use to decide whether or not this is a plea that should be honored? As systems get more and more sophisticated, fewer and fewer people may question whether it is appropriate to anthropomorphize the actions of a (ro)bot and many people will come to treat the (ro)bots as the intelligent entities they appear to be.

Sexual politics are one frontier where these issues may come to the fore. The adoption of mechanical devices, (ro)bots, and virtual reality by the sex industry is nothing new, and while some are offended by such practices, governments in democratic countries have largely turned away from trying to legislate the private practices of the individuals who use these products. However, other social practices are likely to ignite public debate. Examples are the rights of humans to marry (ro)bots and of (ro)bots to own property. Lester del Rey was the first writer to fictionalize a (ro)bot marrying a human in the classic short story 'Helen O'Loy' [16], which he published in 1938. Helen, the robotic heroine, not only marries her inventor but later sacrifices herself when her husband dies. In 2007, almost seventy years later, the University of Maastricht in the Netherlands awarded a doctorate to AI enthusiast David Levy [17] for his thesis that trends in the development of robotics and in shifting social attitudes towards marriage will lead to humans perceiving sophisticated robots as suitable marriage partners."

If any of you are interested in his article or his thesis, it's now in a popular book called “Love and Sex with Robots” [18].

"Given that marriage is an institution legally recognized by the state, legislatures can anticipate debating this possibility. Unlike most other kinds of rights for robots, marriage is an issue that humans will have a direct interest in, and may therefore be among the first rights considered for robots. Initially, presuming a human is granted the right to marry a robot, this might confer only limited rights to the robot. However, over time there would be demands for robots to have the legal right to inherit property, and to serve as surrogates in health decisions involving an incapacitated human spouse.

Long before legislatures consider granting rights to (ro)bots, however, they are likely to be forced to deal with demands to restrict research or even ban outright the development of sophisticated AI systems. Even as the public embraces scientific progress, there is considerable confusion, anxiety, and fear regarding the way future technologies might transform human identity and community.

The difficulty, as we mentioned earlier, will be for legislatures, judges, and public officials to distinguish social challenges that need to be addressed from the issues that are based on speculative projections."

Footnotes

1. Colin Allen - received his B.A. in philosophy from University College London in 1982 and his Ph.D. in philosophy from UCLA in 1989. He has broad research interests in the general area of philosophy of biology and cognitive science, and is best known for his work on animal behavior and cognition. http://www.indiana.edu/~hpscdept/Fac-Allen.shtml June 4, 2010 8:45AM EST

2. Wallach, Wendell and Allen, Colin. Moral Machines: Teaching Robots Right from Wrong. New York: Oxford University Press, Inc., 2009, 189-190. ISBN 978-0-19-537404-9

3. Avatar - n. 1: the incarnation of a Hindu deity (as Vishnu) 2a: an incarnation in human form b: an embodiment (as of a concept or philosophy) often in a person 3: a variant phase or version of a continuing basic entity 4: an electronic image that represents and is manipulated by a computer user (as in a computer game or an online shopping site). Merriam-Webster's Collegiate Dictionary. Massachusetts: Merriam-Webster Incorporated, 2005: 85.

4. Deep-Bluedora - a made-up combination of Deep Blue and Eudora (email software). Deep Blue - chess-playing computer developed by IBM. On May 11, 1997, the machine won a six-game match by two wins to one with three draws against the world champion, Garry Kasparov. http://en.wikipedia.org/wiki/Deep_Blue_(chess_computer)#Deep_Blue_versus_Kasparov May 4, 2010 3:00PM EST

5. Nanorobotics - the technology of creating machines or nano robots at or close to the microscopic scale of a nanometer (10⁻⁹ meters). http://en.wikipedia.org/wiki/Nanorobotics May 4, 2010 3:20PM EST

6. AI - n. (1956) 1: a branch of computer science dealing with the simulation of intelligent behavior in computers 2: the capability of a machine to imitate intelligent human behavior. Merriam-Webster's Collegiate Dictionary. Massachusetts: Merriam-Webster Incorporated, 2005: 70.

7. (Ro)bot - a term the authors coined to encompass both physical robots and software agents (bots).

8. Ray Kurzweil (born February 12, 1948) - an American inventor and futurist. http://en.wikipedia.org/wiki/Ray_Kurzweil May 4, 2010 3:30PM EST

9. Hans Moravec - a Research Professor in the Robotics Institute of Carnegie Mellon University. http://www.frc.ri.cmu.edu/~hpm/hpmbio.html May 4, 2010 3:34PM EST

10. Moore's Law - n. (1980) 1: an axiom of microprocessor development usu. holding that processing power doubles about every 18 months esp. relative to cost or size. Merriam-Webster's Collegiate Dictionary. Massachusetts: Merriam-Webster Incorporated, 2005: 806.

11. Asimov's Three Laws (of Robotics) - a set of three rules written by Isaac Asimov, which almost all positronic robots appearing in his fiction must obey. http://en.wikipedia.org/wiki/Three_Laws_of_Robotics May 4, 2010 3:38PM EST

12. Singularity - n. 1: The quality or condition of being singular. 2: A trait marking one as distinct from others; a peculiarity. 3: Something uncommon or unusual. 4: Astrophysics. A point in space-time at which gravitational forces cause matter to have infinite density and infinitesimal volume, and space and time to become infinitely distorted. 5: Mathematics. A point at which the derivative does not exist for a given function but every neighborhood of which contains points for which the derivative exists. Also called singular point. http://www.answers.com/topic/singularity May 4, 2010 3:50PM EST

13. De facto - [Law Latin "in point of fact"] 1: Actual; existing in fact; having effect even though not formally or legally recognized 2: Illegitimate but in effect. Black's Law Dictionary Third Pocket Edition. Minnesota: West Publishing Company, 2006: 188.

14. Peter Norvig - Director of Research at Google, Inc. http://www.norvig.com/bio.html May 4, 2010 3:59PM EST

15. Thomas Metzinger - Professor of Philosophy, Director of the Theoretical Philosophy Group at the Department of Philosophy of the Johannes Gutenberg-Universität Mainz. http://www.philosophie.uni-mainz.de/metzinger/ May 4, 2010 4:05PM EST

16. "Helen O'Loy" - a 1938 science fiction short story by Lester del Rey. It is about two men who buy a beautiful robot housekeeper and the all too life-like problems they encounter with her. http://bestsciencefictionstories.com/2008/12/21/helen-oloy-by-lester-del-rey/ May 4, 2010 4:10PM EST

17. David Levy (born 14 March 1945, in London) - a Scottish International Master of chess, a businessman noted for his involvement with computer chess and artificial intelligence, and the founder of the Computer Olympiads and the Mind Sports Olympiads. He has written more than 40 books on chess and computers. http://en.wikipedia.org/wiki/David_Levy_(chess_player) May 4, 2010 4:15PM EST

18. Love and Sex with Robots - a book about the future development of robots that will have sex with humans. The book claims that this practice will be routine by 2050. http://en.wikipedia.org/wiki/Love_and_Sex_With_Robots May 4, 2010 4:25PM EST

Photo & Bio

Wendell Wallach, WW Associates

Wallach is a consultant, ethicist, and scholar at Yale University's Interdisciplinary Center for Bioethics. He was a founder and president of two computer consulting companies, Farpoint Solutions and Omnia Consulting Inc., serving PepsiCo International, United Aircraft, and the State of Connecticut, among other clients. Wallach is currently writing a book on the societal, ethical, and public policy challenges arising from technologies that enhance human faculties.