The Emotion Machine: Commonsense Thinking, Artificial Intelligence, and the Future of the Human Mind

Marvin Lee Minsky, Ph.D.

Finally, there's my book, The Emotion Machine. Everybody thinks that thinking is just logic and not very interesting, while emotions are complicated, deep, inexplicable, and mysterious. To me, it is exactly the opposite.

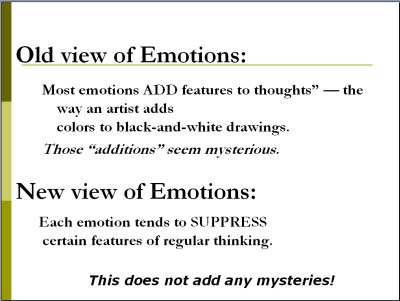

The classical view is that there is something called rational thought, and it's pretty simple and logical: you have the facts, and maybe inferences from them, and blah, blah, blah. The mysterious things about people are feelings and emotions and loves and hates and blah, blah, blah. We have all grown up in a culture that believes emotions are additional, mysterious features of thought that make things more lively, just as adding color improved black-and-white paintings, unless you're an Escher, in which case you realize that colors are just silly. That is the great mystery people worry about, and my view is exactly the opposite.

GLORIA RUDISCH: (Reading from The Emotion Machine, Pages 9 and 10)

What is love and how does it work? Is this something that we want to understand, or is it one of those subjects that we don't really want to know about? Here, our friend Charles attempted to describe his latest infatuation.

"I've just fallen in love with such a wonderful person. I scarcely think about anything else. My sweetheart is unbelievably perfect, of indescribable beauty, flawless character, and incredible intelligence. There's nothing I wouldn't do for her."

On the surface, such statements seem positive; they are all composed of superlatives. But notice that there's something strange about this: most of these phrases of praise use syllables like "un," "less," and "in," which show that they are really negative statements describing the person who's saying them.

Wonderful. Indescribable.
(I can't figure out what attracts me to her.)

I can scarcely think of anything else.
(Most of my mind has stopped working.)

Unbelievably perfect. Incredible.
(No sensible person believes such things.)

She has flawless character.
(I've abandoned my critical faculties.)

There is nothing I wouldn't do for her.
(I've forsaken most of my usual goals.)

Our friend sees all this as positive. It makes him feel happy and more productive, and relieves his dejection and loneliness. But what if most of these pleasant effects result from his success at suppressing his thoughts about what his sweetheart actually says:

"Oh, Charles, a woman needs certain things. She needs to be loved, wanted, cherished, sought-after, wooed, flattered, cosseted, pampered. She needs sympathy, affection, devotion, understanding, tenderness, infatuation, adulation, idolatry. That isn't much to ask, is it, Charles?"

Thus, love can make us disregard most defects and deficiencies, and make us deal with blemishes as though they were embellishments, even when, as Shakespeare said, we may still be partly aware of them:

"When my love swears that she is made of truth, I do believe her, though I know she lies."

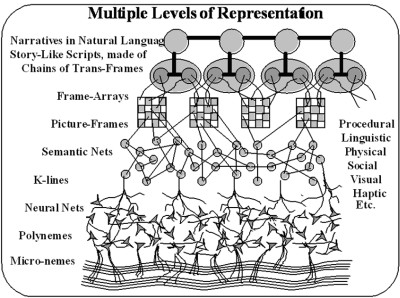

The picture of the mind that I propose is this: think of the brain as 400 different computers or databases. We have tens of thousands of neuroscientists who are trying to find out what these are, and I wish they'd read my book so they would have a better idea of what they're supposed to do. If you turned these on all at once, all the brain centers, they would conflict for resources and get in each other's way, and there would be an enormous traffic jam; for all we know, that happens to some people sometimes. But the idea of this theory is that you have a system which switches among different mental states, which is what this critic-selector idea is about.

There is some higher part of the brain that recognizes that you're facing a problem and turns on a way to think. What I mean by "a way to think" is simply turning on a certain collection of brain centers and turning off others. What happens when you're angry? It's not that you've added some mysterious thing to rational thought; it's that you've turned off all sorts of things that allow you to look ahead. You've turned off most of your social diplomacy systems, you've turned off long-range planning, where you compare different actions, and you've turned on some things that aren't usually on, which cause you to act more rapidly. Most of the secret of acting rapidly is to stop other processes, the ones involved in negotiation and so forth. When you're afraid, it does similar things but turns on a different set. Hunger is a built-in system which turns some things on and most others off. Of course, some things, like breathing, stay on almost all the time, unless you are swimming underwater or something and learn to control some of those.
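The idea that a "way to think" is just a set of resources switched on, with everything else switched off, can be illustrated with a toy program. What follows is a minimal Python sketch under loud assumptions: the resource names, the hypothetical `Critic` and `Mind` classes, and the rule that the first critic to fire wins are all illustrative inventions, not the architecture from The Emotion Machine or Push Singh's prototype.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List, Optional

# Hypothetical stand-in for resources that stay on in every way to think,
# the way breathing does.
ALWAYS_ON: FrozenSet[str] = frozenset({"breathing"})


@dataclass
class Critic:
    """Recognizes a kind of situation and names the way to think it calls for."""
    name: str
    detects: Callable[[Dict[str, bool]], bool]
    way_to_think: str


@dataclass
class Mind:
    # Each way to think is modeled as nothing more than the set of
    # resources ("brain centers") it switches on.
    ways_to_think: Dict[str, FrozenSet[str]]
    critics: List[Critic] = field(default_factory=list)
    active: FrozenSet[str] = ALWAYS_ON

    def add_critic(self, critic: Critic) -> None:
        """Contribute a new critic without touching the rest of the system."""
        self.critics.append(critic)

    def switch_to(self, way: str) -> None:
        """Select a way to think: turn its resources on and every other
        resource off, except the always-on ones."""
        self.active = ALWAYS_ON | self.ways_to_think[way]

    def step(self, situation: Dict[str, bool]) -> Optional[str]:
        """Let the first critic that recognizes the situation pick a way to
        think (an assumed priority rule). If none fires, nothing changes."""
        for critic in self.critics:
            if critic.detects(situation):
                self.switch_to(critic.way_to_think)
                return critic.way_to_think
        return None


if __name__ == "__main__":
    mind = Mind(ways_to_think={
        "deliberate": frozenset({"look_ahead", "social_diplomacy", "long_range_planning"}),
        "anger": frozenset({"rapid_action"}),
        "hunger": frozenset({"food_seeking", "rapid_action"}),
    })
    mind.add_critic(Critic("insulted", lambda s: s.get("insulted", False), "anger"))
    mind.add_critic(Critic("empty_stomach", lambda s: s.get("hungry", False), "hunger"))

    mind.switch_to("deliberate")
    print(sorted(mind.active))
    # ['breathing', 'long_range_planning', 'look_ahead', 'social_diplomacy']

    print(mind.step({"insulted": True}))  # anger
    print(sorted(mind.active))  # ['breathing', 'rapid_action']
```

In this sketch, anger is not an ingredient added on top of deliberation; selecting it simply switches off look-ahead, diplomacy, and long-range planning and leaves rapid action on. Because critics are registered independently through `add_critic`, the toy also hints at the hope, expressed later in the talk, that other people could extend such a system just by putting in new critics and selectors.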

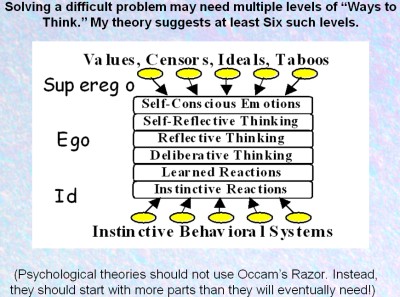

It is the same on the highest levels. In this diagram, you are facing a problem. One thing you can do is split it into parts, or do a trial-and-error search, or make an analogy, or go back to school, or whatever. I conclude that this has to happen on about six levels. Some readers ask: do you really need to distinguish between self-reflective thinking and reflective thinking? The answer is probably not.

I think the reason most psychological theories got stuck is physics envy, again. Namely, there is an idea that to solve a problem you should find the simplest possible theory; it's called Occam's Razor [1]. But if you know that the brain has evolved 400 different centers and you have a theory that talks about six processes, you've got to be wrong. All the psychologists who have really good-looking theories must be totally out of the ballpark, because it's expensive to keep a brain. It uses twenty percent of your calories, and that matters, because evolution depends a lot on energy.

If one creature is two percent more efficient than another, then there will be none of the others left in a hundred generations. Have you heard that you only use ten percent of your brain? If you used twenty percent, you'd probably be a manic traffic jam. No one knows, but that's the main idea of The Emotion Machine theory, and I am trying to get a group of researchers together to work on this architecture. I speculate it will take several years, and I haven't been able to get any funding for it yet. If I don't, maybe I could threaten to do it myself by writing a big program, or I might write more essays. I am hoping that if we go in this direction, it will take five or six years to get a working prototype. We have a small one with the first three levels that was built by a brilliant student of ours named Push Singh [2], who unfortunately died last year, and no one has appeared with the same set of amazing abilities.

What I suspect is that if we do this project, we might, in five or six years, get a prototype that's pretty smart and does some of the things that a four-year-old does. Then, if this architecture works, other people can contribute just by putting in the right critics and selectors, and maybe the thing would grow. There is no predicting how long. People are always asking, "How long will such a thing take?" Herbert Simon [3] in 1970 thought that something like human-level AI might appear in ten years. In 1970, he also predicted that there would be a world-champion chess program in ten years.

He thought a lot of people would work on it. When you look at these predictions, Kurzweil's [4] are pretty good; they appear to be based on looking backwards, but you can't tell whether a field will catch fire or not. Ray is quite careful about saying what kinds of systems are more or less likely to obey his Law of Accelerating Returns [5]. My fear is that AI won't take off until we get something like this emotion machine architecture working, so that a lot of people can contribute to it. AI's growth has been linear, or less, because each system is built in such a way that another person cannot add to it; you can't put two together, and there hasn't been any high-level architecture for combining different methods. We will see what happens. I am sure that if humans survive the next half century, we'll start seeing machines whose right to citizenship will be a serious matter for discussion.

The one thing I would like to add is that when you do make a smart machine, the first two or three hundred versions of it will, in fact, be so buggy that you'd better not give them any rights, no matter how convincing they are. It is well known that with any large program, it takes years to get rid of the bugs.

Footnotes

1. Occam's Razor - (sometimes spelled Ockham's razor) is a principle attributed to the 14th-century English logician and Franciscan friar William of Ockham. The principle states that the explanation of any phenomenon should make as few assumptions as possible, eliminating those that make no difference in the observable predictions of the explanatory hypothesis or theory.

http://en.wikipedia.org/wiki/Occam's_Razor July 18, 2008 2:48PM EST

2. Push Singh - (1972 – 2006) was a Ph.D. student in the department of computer science and electrical engineering at MIT. His research goal was to understand how minds work, so that he could construct a machine that thinks. He was building a "unified theory of non-unified theories of cognition" so that he could draw on a diversity of ideas to help him in his purpose.

http://www.kurzweilai.net/bios/... July 18, 2008 3:00PM EST

3. Herbert Alexander Simon (June 15, 1916 – February 9, 2001) - was an American political scientist whose research ranged across the fields of cognitive psychology, computer science, public administration, economics, management, philosophy of science and sociology and was a professor, most notably, at Carnegie Mellon University.

http://en.wikipedia.org/wiki/Herbert_Simon July 18, 2008 3:05PM EST

4. Raymond Kurzweil - (born February 12, 1948) is an inventor and futurist. He has been a pioneer in the fields of optical character recognition (OCR), text-to-speech synthesis, speech recognition technology, and electronic keyboard instruments. He is the author of several books on health, artificial intelligence, transhumanism, the technological singularity, and futurism.

http://en.wikipedia.org/wiki/Ray_Kurzweil July 18, 2008 3:09PM EST

5. Law of Accelerating Returns - An analysis by Ray Kurzweil (Id.) which he explains as, “[T]he inherent acceleration of the rate of evolution, with technological evolution as a continuation of biological evolution.”

Kurzweil, Ray. The Singularity Is Near. New York: Viking, 2005: 7.

Bio

Marvin Lee Minsky, Ph.D. has made many contributions to AI, cognitive psychology, mathematics, computational linguistics, robotics, and optics. In recent years he has worked chiefly on imparting to machines the human capacity for commonsense reasoning. His conception of human intellectual structure and function is presented in The Society of Mind, which is also the title of the course he teaches at MIT.