The Emotion Machine: Commonsense Thinking, Artificial Intelligence, and the Future of the Human Mind

Marvin Lee Minsky, Ph.D.


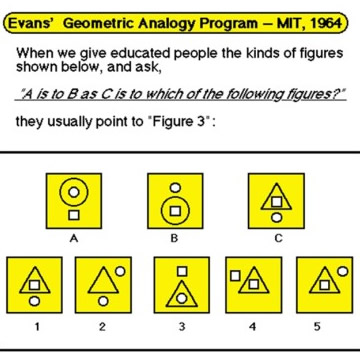

You see the evolution going backwards here because the first programs are doing things that only college students do, and then you get to high school students and my favorite one: A is to B as C is to which of the following?

Almost everybody past the tenth grade gets the answer. You see that in 1964 we had programs that were doing a little bit of reasoning by analogy, and there is very little of that forty years later. Why? What happened in artificial intelligence was that people said, here are these different things, but I want a unified theory. I call it physics envy; it's like, this guy writes a program to do this and somebody writes one to do that and so forth, and it will never end, no generality.

They were right. The answer is let's invent a way of general intelligence, a uniform system that will maybe start as a baby and learn, or maybe it will collect enough statistics so that as your database gets bigger it will know everything, or maybe you collect common sense statements from the Web until finally it knows the answer to every question.

What happened around 1980 is that this line of progress actually disappeared and it was replaced by people who said I have a theory of learning; I'll make a genetic system that mutates and selects the survivors and so forth. Genetic programs were good at solving certain kinds of problems. Some of them designed circuits that no one ever thought of. Statistical programs turned out to be good at, for example, optical character recognition. How do you tell a P from a Q? The probability depends on what happens in the lower right-hand corner, whether you can see a little thing sticking out from what would otherwise be a P and so forth. Today we have thousands and maybe tens of thousands of particular programs based on one of ten or twelve of what I call fads. Each of them is pretty good but none of them, for example, can do common sense reasoning and make analogies and so forth.
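The "mutates and selects the survivors" idea can be illustrated with a very small sketch. The talk does not describe a specific algorithm, so everything here is an assumption made for illustration: the toy problem (evolving a bit string toward all 1s), the names `fitness`, `mutate`, and `evolve`, and the parameter values.

```python
import random

GENOME_LENGTH = 20  # hypothetical toy problem: evolve a bit string toward all 1s


def fitness(genome):
    """Score a genome by counting its 1 bits (higher is better)."""
    return sum(genome)


def mutate(genome, rng, rate=0.05):
    """Return a copy of the genome with each bit flipped with probability `rate`."""
    return [1 - bit if rng.random() < rate else bit for bit in genome]


def evolve(generations=50, population_size=30, seed=0):
    """Run a mutate-and-select loop; return the best fitness seen in each generation."""
    rng = random.Random(seed)
    population = [
        [rng.randint(0, 1) for _ in range(GENOME_LENGTH)]
        for _ in range(population_size)
    ]
    best_per_generation = []
    for _ in range(generations):
        # Selection: rank by fitness and keep the better half unchanged.
        population.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(population[0]))
        survivors = population[: population_size // 2]
        # Mutation: refill the population with mutated copies of survivors.
        children = [
            mutate(rng.choice(survivors), rng)
            for _ in range(population_size - len(survivors))
        ]
        population = survivors + children
    return best_per_generation


history = evolve()
```

Because the survivors are carried over unchanged, the best score in `history` can never go down from one generation to the next. That is about all such a loop guarantees; it says nothing about common sense or analogy, which is the limitation the talk points at.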

The problem today is that we have lots of programs that are much better than people at, for example, scheduling airline reservations or -- in fact, Ray Kurzweil has a company that he claims does better than brokers at investing in the stock market, and I don't suppose he will tell us exactly how that works.

There is no machine that can look around and tell a dog from a cat or recognize ordinary things. There are programs that can find cars in parking lots, but if you aim one at any other scene it will find cars there, too, with lower probabilities. No machine can make a bed. No robot can put a pillow in a pillow case, my favorite example. I don't know if humans can do it without holding the pillow in their mouths. Worst of all, there is no program that can read a simple story like a fairy tale and answer questions about what it might possibly mean.

You can use a string to pull something, but not push; people go indoors when it rains; it's hard to stay awake when you're bored; if you break something, you have to pay for it; it’s hard to understand speech in a noisy place.

Every normal child probably knows about twenty million of these things. Nobody knows exactly how large a typical human common sense knowledge base is, but there is some discussion of it. There are a couple of projects in the world; the most famous one is the CYC Project (pronounced "psych") [1] of Doug Lenat in Austin, Texas, and it knows about three or four million little facts about common sense knowledge. That took twenty years to build. We have a project at MIT called Open Mind [2], which knows three or four -- I am not sure how much it knows -- maybe less than CYC. It took only a few months to build because we got tens of thousands of people on the Web to type in stuff. However, we don't know how to use it, and there's no program that can do much common sense reasoning with that database. That is where Artificial Intelligence is now. There is no program that can think the kinds of things that any five- or six-year-old can think.

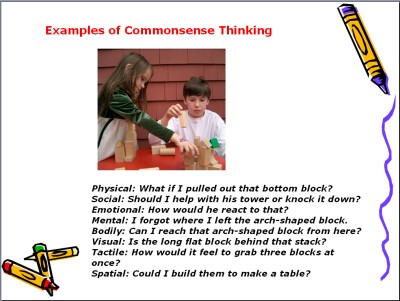

Here is one of my favorite examples: Here are two kids, who are grandchildren of ours, and they are playing with old-fashioned building blocks.

Anyway, Charlotte is actually watching Myles build -- even though she appeared to be building -- and she is thinking these eight different kinds of thought. They are not just different thoughts; they are in different realms of existence. But what would happen if I pulled out the bottom block? Obviously, then it would fall. Should I help him with this tower or knock it down? You always have that choice. How would he react to it? I forgot where I left the arch-shaped block. She is thinking in different worlds, visual and tactile. How would it feel to grab three blocks at once? You can all do that.

I've had long discussions with John McCarthy [3], who I worked with for many years, about how you get your keys when you put your hand in your pocket. AI people have spent hundreds of thousands of man-years failing to do much about vision, but virtually nobody has spent any time at all asking how tactile perception works. You can put your hand in your pocket and, it's amazing, you can recognize what's there sometimes. The reason why AI is not transhuman yet, or even nearly human, is that most of the researchers have decided that this field is very chaotic.

Let us imitate the physicist. After all, mechanics was a mess until Newton found three laws. Electricity was a mess until Maxwell found four laws for electricity and magnetism. That was a mess until Einstein discovered that electricity and magnetism were the same thing and you only needed one law. So wow, look at physics! Granted, these things are hundreds of years apart, but unified theories work. Most people who build learning machines try to imitate the way rats and pigeons learned in the great experiments of Pavlov in 1910 or so and Fred Skinner in the 1930s and '40s.

Around 1960 a young man named Edward Feigenbaum [4] developed what are called rule-based systems, and these were wonderful ways to write programs. What is a regular program? It is a sequence of commands, do this, do that, with branches depending on conditions. A rule-based system is sort of the opposite of a program; it is a lot of statements and it is only conditions. It says if this happens, do this; if that happens, do that, and they are in no particular order. In some sense rule-based systems are very versatile because you dump them into a new situation and they react immediately and they don't mind being interrupted.
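A minimal sketch may make the contrast concrete. It is not Feigenbaum's system or any particular expert-system shell: the rule names, the fact keys, and the `react` function are hypothetical, and the rules are loosely borrowed from the common sense statements earlier in the talk. Each rule is only a condition paired with an action, and the list has no meaningful order.

```python
from typing import Callable, Dict, List, Tuple

Facts = Dict[str, bool]
# A rule is (name, condition, action). All rules below are hypothetical examples.
Rule = Tuple[str, Callable[[Facts], bool], str]

RULES: List[Rule] = [
    ("shelter",
     lambda f: f.get("raining", False) and f.get("outdoors", False),
     "go indoors"),
    ("pay",
     lambda f: f.get("broke_something", False),
     "pay for it"),
    ("quiet",
     lambda f: f.get("noisy", False) and f.get("listening", False),
     "move somewhere quieter"),
]


def react(facts: Facts, rules: List[Rule] = RULES) -> List[str]:
    """Return the action of every rule whose condition holds for these facts."""
    return [action for _name, condition, action in rules if condition(facts)]


print(react({"raining": True, "outdoors": True}))  # prints ['go indoors']
```

Nothing in `react` depends on what happened before it was called, so it can be dropped into a new situation (a new set of facts) and respond at once. Shuffling `RULES` changes only the order in which actions are listed, not which actions fire.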

Footnotes

1. CYC Project - an artificial intelligence project that attempts to assemble a comprehensive ontology and database of everyday common sense knowledge, with the goal of enabling AI applications to perform human-like reasoning.

The project was started in 1984 by Doug Lenat as part of Microelectronics and Computer Technology Corporation. The name "Cyc" (from "encyclopedia", pronounced like psych) is a registered trademark owned by Cycorp, Inc. in Austin, Texas, a company run by Lenat and devoted to the development of Cyc.

http://en.wikipedia.org/wiki/Cyc July 15, 2008 2:46PM EST

2. Open Mind - Open Mind Common Sense is a knowledge acquisition system designed to acquire commonsense knowledge from the general public over the web. We describe and evaluate our first fielded system, which enabled the construction of a 450,000 assertion commonsense knowledge base.

http://web.media.mit.edu/~push/ODBASE2002.pdf July 15, 2008 2:53PM EST

3. John McCarthy (September 4, 1927–) - Professor Emeritus (as of 2001 Jan 1) of Computer Science at Stanford University, a computer scientist; he coined the term "artificial intelligence".

http://www-formal.stanford.edu/jmc/ July 15, 2008 3:08PM EST

4. Edward Feigenbaum - a Professor of Computer Science and Co-Scientific Director of the Knowledge Systems Laboratory at Stanford University. Dr. Feigenbaum served as Chief Scientist of the United States Air Force from 1994 to 1997.

Professor Feigenbaum was Chairman of the Computer Science Department and Director of the Computer Center at Stanford University. Until 1992 Dr. Feigenbaum was Co-Principal Investigator of the national computer facility for applications of Artificial Intelligence to Medicine and Biology known as the SUMEX-AIM facility, established by NIH at Stanford University. He is the Past President of the American Association for Artificial Intelligence. He has served on the National Science Foundation Computer Science Advisory Board, an ARPA study committee for Information Science and Technology; and on the National Research Council's Computer Science and Technology Board. He has been a member of the Board of Regents of the National Library of Medicine.

http://ksl.stanford.edu/people/eaf/ July 15, 2008 3:34PM EST
